For your website to rank on Google, or on any other search engine, it needs to first be crawled by that search engine. But how you see your website differs greatly from how Google sees it. That’s why it’s important to understand what elements search engines look at if you’d like to have a website that appears in search results.

So, what does Google see when it crawls your website? When a search engine crawls your website, it reads the on-page content and links in the form of HTML code. It also looks at the title tag, header tags and meta description of your page. Google also sees images by reading their alt text and looks at schema markup to understand what the website offers.

Want to find out more about how Google sees each of those elements? Read on as we go into more detail further down this blog.

Jump To:

- How Google Sees Your Website

- How Google Reads Your Content

- How Google Sees Your Images and Videos

- How Google Crawls Links

- Elements that Google Sees But We Don’t

- Google Crawling Frequently Asked Questions

How Does Google See My Website?

No matter what theme or CMS (content management system) your website uses, when you open it, you should be able to see key elements such as your website’s URL, your logo, the navigation bar and the content on your pages: the title, the text content, the images and other media, to name a few.

And interestingly enough, Google is able to see that same information! Except, it sees it slightly differently.

Regardless of how many pages you’ve got on your website, Google can inspect them all with the help of Googlebot, Google’s very own web crawler. Googlebot comes across billions of pages and sites every day, so to help it find all of your pages, especially if your website has a lot of them, it’s recommended you have a sitemap.

First Point of Contact of Google and Your Website

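When Googlebot arrives on your website, one of the first things it will look for is your robots.txt file: a plain-text file at the root of your website that tells crawlers which URLs they may and may not crawl, and which can also point to your sitemap. The sitemap itself is a separate file, usually in XML format, that lists the pages of your website that you want Google to crawl, along with additional information about other files on your website.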

Think of a sitemap as a guide; you show Google which pages and files on your website are important so that once Googlebot arrives on your website, it knows where to go next.
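To make the relationship between robots.txt and the sitemap more concrete, here is a minimal sketch in Python. It uses the standard library's `urllib.robotparser` to read a robots.txt file the way a well-behaved crawler might. The robots.txt content and the `example.com` URLs are hypothetical, and Python's parser is only a stand-in for illustration; it is not how Googlebot itself is implemented.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a placeholder site (example.com).
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Sitemap: https://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Ask whether a crawler identifying as "Googlebot" may fetch each URL.
can_crawl_blog = parser.can_fetch("Googlebot", "https://www.example.com/blog/")
can_crawl_admin = parser.can_fetch("Googlebot", "https://www.example.com/admin/login")

# Sitemap URLs declared in robots.txt (None if there are none).
sitemaps = parser.site_maps()

print("Blog crawlable:", can_crawl_blog)
print("Admin crawlable:", can_crawl_admin)
print("Sitemaps:", sitemaps)
```

In this sketch the blog URL is allowed, the admin URL is blocked, and the crawler learns where the sitemap lives from the `Sitemap:` line.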

Wildcat Top Tip: if your website is on WordPress, you can use the Yoast SEO plugin, which will automatically generate an XML sitemap for you. Then, you can easily submit it to Google Search Console. Need help with this? Read more about building and submitting sitemaps on Google Search Central.

Once Googlebot discovers your sitemap, it will move on to read your pages.

How Does Google Read My Content?

Google will read every single word that’s on your page – from the page titles in the navigation menu, through the page content, to the text in the footer. However, while you can see the different fonts and text sizes, Google will see it all in code.

Header Tags and Paragraph Text

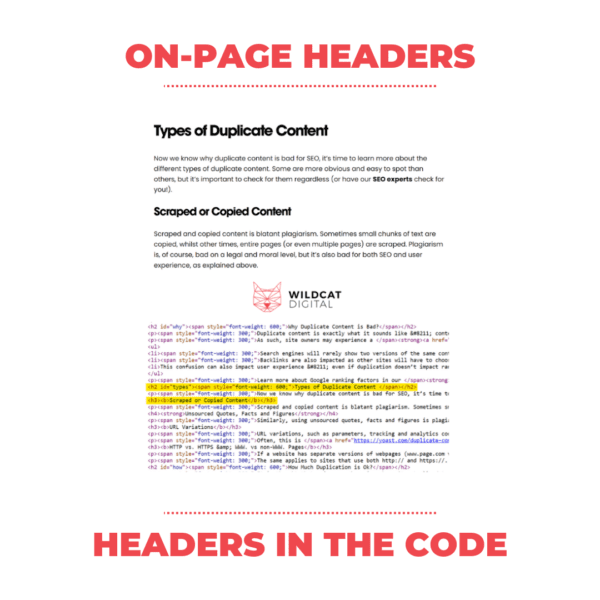

Alongside paragraphs with text, your pages are likely to have a title and subheadings to help page visitors navigate more easily through the page’s content. Those subheadings are likely to be tagged as header tags in the page code.

Google pays extra attention to the header tags. To differentiate them from the paragraph text on the page, they are marked differently in the HTML code: headings are wrapped in <h1> to <h6> tags, while paragraphs are wrapped in <p> tags.

Top Tips for Structuring Your Page Content

- Aim for a single, unique H1 on each page. Out of the entire on-page content, this is one of the clearest signals to Google as to what the page is about.

- Use H2s, H3s, H4s etc. to mark subheadings. This helps improve user experience and Google likes to reward user-friendly content.

- Break up huge blocks of text. Long paragraphs make the text trickier to follow and digest. Google understands this, so it’s recommended to use images, bullet points, lists and other prompts that will help you improve the readability of your pages.
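The structure tips above can be checked with a short script. The following is a rough sketch, using Python's built-in `html.parser`, that pulls the heading outline out of a page's HTML and counts the H1s. The `HeadingOutline` class and the sample HTML are made up for illustration; a real audit would fetch the live page and would likely use a more forgiving HTML library.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collects (tag, text) pairs for every h1-h6 element, in page order."""

    HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.headings = []
        self._current = None  # heading tag currently open, if any
        self._text = []       # text pieces seen inside the open heading

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADING_TAGS:
            self._current = tag
            self._text = []

    def handle_data(self, data):
        if self._current:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            self.headings.append((tag, "".join(self._text).strip()))
            self._current = None


# Hypothetical page fragment.
SAMPLE_HTML = """
<h1>How Google Sees Your Website</h1>
<p>Intro paragraph.</p>
<h2>How Google Reads Your Content</h2>
<h3>Header Tags and Paragraph Text</h3>
"""

outline = HeadingOutline()
outline.feed(SAMPLE_HTML)
h1_count = sum(1 for tag, _ in outline.headings if tag == "h1")

for tag, text in outline.headings:
    print(tag, "-", text)
print("Exactly one H1:", h1_count == 1)
```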

How Does Google Crawl Images and Videos?

Alt text is what helps Google understand what’s featured in an image. Because Googlebot reads the page code rather than looking at the picture the way a visitor does, it relies on the image’s alt text (the alt attribute of the image tag), which should describe what the image shows.
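As a quick illustration, the sketch below scans a piece of HTML for images and reports which ones have no usable alt text. The `AltTextAudit` class, the file names and the alt text are all hypothetical examples, and the check is deliberately simple.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Sorts <img> tags into those with alt text and those without."""

    def __init__(self):
        super().__init__()
        self.with_alt = {}     # src -> alt text
        self.missing_alt = []  # src of images with no or empty alt

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src", "")
        alt = (attributes.get("alt") or "").strip()
        if alt:
            self.with_alt[src] = alt
        else:
            # Note: an empty alt can be intentional for purely decorative
            # images, so treat this list as "worth reviewing", not "wrong".
            self.missing_alt.append(src)


# Hypothetical page fragment.
SAMPLE_HTML = """
<img src="red-trainers.jpg" alt="Pair of red running trainers on a white background">
<img src="banner.png">
<img src="divider.svg" alt="">
"""

audit = AltTextAudit()
audit.feed(SAMPLE_HTML)

print("Described images:", audit.with_alt)
print("Images to review:", audit.missing_alt)
```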

When it comes to videos, you can help Google index them by providing metadata about each video, including its title, description, length, thumbnail image and upload date.
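One common way to provide this metadata is structured data using the schema.org `VideoObject` type, embedded in the page as JSON-LD. The sketch below builds such a snippet in Python. The choice of JSON-LD is an assumption on our part (a video sitemap is another option), and every value shown, including the URLs, is a placeholder.

```python
import json

# Placeholder values; replace with the details of your own video.
video_metadata = {
    "@context": "https://schema.org",
    "@type": "VideoObject",
    "name": "How to submit a sitemap",
    "description": "A short walkthrough of submitting a sitemap.",
    "thumbnailUrl": "https://www.example.com/thumbs/sitemap-video.jpg",
    "uploadDate": "2024-01-15",
    "duration": "PT2M30S",  # ISO 8601 duration: 2 minutes 30 seconds
    "contentUrl": "https://www.example.com/videos/sitemap-video.mp4",
}

json_ld = json.dumps(video_metadata, indent=2)

# The JSON-LD goes inside a script tag in the page's HTML.
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

The title, description, length, thumbnail image and upload date mentioned above map to the `name`, `description`, `duration`, `thumbnailUrl` and `uploadDate` properties.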

How Does Google Crawl Links?

Googlebot crawls links in the same way it crawls content: it sees them in the HTML code. However, for Google to crawl your links, they need to be an <a> tag with an ‘href’ attribute. If they don’t follow this format, Googlebot won’t be able to follow them.
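The difference is easier to see in code. The sketch below, which uses a hypothetical `LinkExtractor` class and made-up sample HTML, collects only the links written as an anchor tag with an href attribute. The JavaScript-only "link" and the clickable span are skipped, which mirrors the rule described above.

```python
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collects href values from <a> tags that actually have an href."""

    def __init__(self):
        super().__init__()
        self.crawlable = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.crawlable.append(href)


# Hypothetical page fragment: two proper links, two that are not.
SAMPLE_HTML = """
<a href="/services/">Our services</a>
<a href="https://www.example.com/blog/">Blog</a>
<a onclick="goTo('/contact/')">Contact</a>
<span data-url="/about/">About</span>
"""

extractor = LinkExtractor()
extractor.feed(SAMPLE_HTML)
print("Crawlable links:", extractor.crawlable)
```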

Are There Elements That Google Sees But We Don’t?

Yes, there are certain page elements that you wouldn’t be able to see on-page without referring to the page code or using a tool to inspect your page. These include:

- Backlinks – while you can’t see how many external websites link to your pages by simply looking at your website, Google not only sees that number but also takes it as one of the strongest factors for ranking your website.

- Schema markup (also known as structured data) – you can’t see whether a page has Organization, Product, Article, FAQ or any other type of structured data without looking at the code or using a tool such as the Schema Markup Validator or Google’s Rich Results Test to inspect the page.

- Follow or No-Follow links – Google sees whether a link in your content has a rel="nofollow" attribute in the HTML; something you can’t see by simply looking at the page. There is no rel="follow" value: a link without nofollow is simply treated as a normal, followed link. You can learn more about follow and no-follow links in this insightful Ahrefs article on links.

These elements, alongside title tags, meta descriptions, user engagement and mobile-friendliness, are just a few of the many things that Google can see, unlike us, and takes into account when deciding where to rank your website.
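Two of the hidden elements listed above, no-follow links and schema markup, can be surfaced with a few lines of Python. This is a simplified sketch: the `HiddenSignals` class and the sample HTML are hypothetical, and it assumes each JSON-LD block contains a single top-level object.

```python
import json
from html.parser import HTMLParser


class HiddenSignals(HTMLParser):
    """Finds nofollow links and JSON-LD schema types in a page's HTML."""

    def __init__(self):
        super().__init__()
        self.followed_links = []
        self.nofollow_links = []
        self.schema_types = []
        self._in_json_ld = False
        self._json_chunks = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "a" and attributes.get("href"):
            # rel can hold several space-separated values.
            rel_values = (attributes.get("rel") or "").lower().split()
            if "nofollow" in rel_values:
                self.nofollow_links.append(attributes["href"])
            else:
                self.followed_links.append(attributes["href"])
        elif tag == "script" and attributes.get("type") == "application/ld+json":
            self._in_json_ld = True
            self._json_chunks = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._json_chunks.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            data = json.loads("".join(self._json_chunks))
            self.schema_types.append(data.get("@type"))


# Hypothetical page fragment.
SAMPLE_HTML = """
<a href="https://partner.example.com/">Partner</a>
<a href="https://ads.example.com/" rel="nofollow sponsored">Advert</a>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Organization", "name": "Example Ltd"}
</script>
"""

signals = HiddenSignals()
signals.feed(SAMPLE_HTML)
print("Followed:", signals.followed_links)
print("No-follow:", signals.nofollow_links)
print("Schema types:", signals.schema_types)
```

Backlinks are not covered here because they live on other websites, so they cannot be read from your own page's HTML.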

Google Crawlability FAQs

How Do I Get Google to Crawl My Site Faster?

You can get Google to crawl your website more often by removing low-quality pages from your website, especially if you have a large website. Google can crawl only so many pages at a time, so removing ones that don’t bring value will work in favour of your quality pages.

In addition, adding fresh content regularly, building a good internal links structure and reducing the number of technical errors can also help Google crawl your website more often.

How Often Does Google Crawl Websites?

This depends on the size of your website and how often you update it, but in general, Google crawls a website anywhere from every 2-3 days to every 2-3 weeks. Something to bear in mind, though, is that Googlebot crawls different URLs at different frequencies. For example, it might crawl some URLs every 5-6 days and others only every 5-6 months.

How Can I Check if Google Has Crawled My Website?

Use Google Search Console’s URL Inspection tool, which shows the date on which a page was last crawled and whether it has been indexed. If you find that a page on your website hasn’t been indexed, which is often the case for newly created pages, you can request indexing manually.

Do You Need Help Making Your Website More Visible on Google?

You’re in the right place. We’ve helped a wide range of different businesses punch above their weight online with tailored SEO services. Contact us today for a free consultation.