With so many active websites at the fingertips of the modern-day internet user, a search engine must be able to display only the most relevant and important information for any given query.

So how can search engines possibly hope to display relevant information to their users when there are so many web pages?

The answer lies in crawling and indexing: processes that are fundamental to how search engines work, and equally important when considering search engine optimisation (SEO). So, what exactly is crawling and indexing?

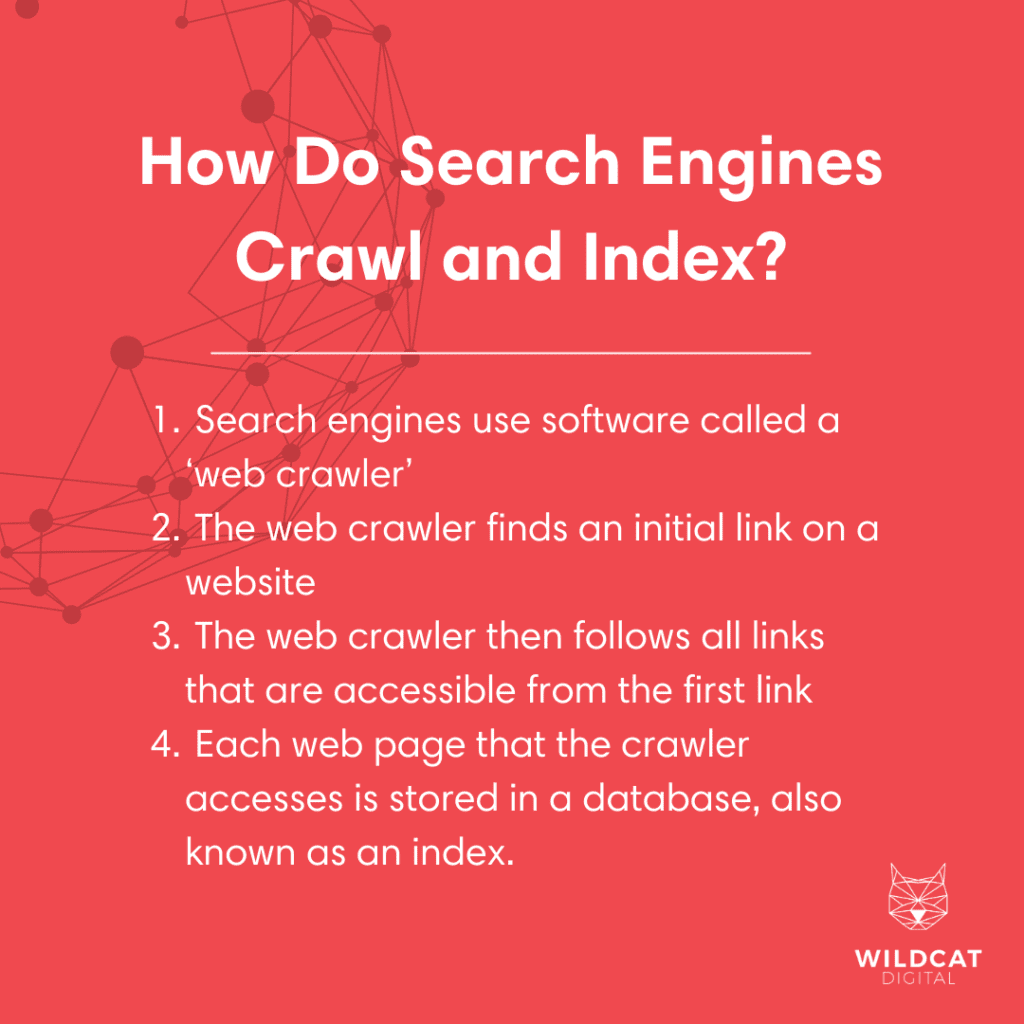

Crawling and indexing in SEO describe the process, carried out by search engines, of finding and storing information held on websites. Search engines use software called a ‘web crawler’ to find web pages by following links. The information on those pages is then stored in a database, or ‘index’. When a user performs a search, the search engine reads from the index to provide the user with web pages relevant to the search query.

Jump to:

- What is Crawling in SEO?

- What is Indexing in SEO?

- How Search Engines Work – Crawling and Indexing

- Can You Improve the Crawling and Indexing on Your Site?

What is Crawling in SEO?

Search engines use software known as a ‘web crawler’ (sometimes called a ‘web spider’!) to explore the world wide web in a complex, automated process. This method of data gathering lets search engines cover vast quantities of web pages one link at a time (which is why internal linking is so important in SEO!).

The web crawler starts from a known page, follows the links it contains, and then follows the links on each newly discovered page in turn. This process repeats, and results in the collection of many billions of web pages. These pages are stored in a huge database, or ‘index’.
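Following links page by page like this is essentially a graph traversal. The minimal Python sketch below assumes a small, hypothetical site held in memory as a dictionary (`LINK_GRAPH`) that maps each page to the pages it links to; a real crawler would download each page over HTTP, parse its HTML for links, and respect rules such as robots.txt. It uses breadth-first search, which is one common strategy and not necessarily the one any particular search engine uses.

```python
from collections import deque

# Hypothetical site: each page URL maps to the URLs it links to.
# A real crawler would fetch each page and extract these links from its HTML.
LINK_GRAPH = {
    "/": ["/about", "/services"],
    "/about": ["/", "/team"],
    "/services": ["/services/seo", "/contact"],
    "/services/seo": ["/contact"],
    "/team": [],
    "/contact": [],
    "/old-offer": ["/contact"],  # nothing links here, so it is never discovered
}


def crawl(start: str, graph: dict[str, list[str]]) -> list[str]:
    """Discover pages breadth-first by following links from a start page."""
    discovered = [start]
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for link in graph.get(page, []):
            if link not in seen:  # skip pages that have already been found
                seen.add(link)
                discovered.append(link)
                queue.append(link)
    return discovered


if __name__ == "__main__":
    print(crawl("/", LINK_GRAPH))
```

Starting from the homepage, the crawler reaches every page except `/old-offer`, because no other page links to it.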

What is Indexing in SEO?

When you search the world wide web using a search engine like Google, you are actually searching the index of sites that a web crawler has collected. Google estimates that each search performed using their engine involves the use of around 500 servers to ensure that a search is carried out efficiently, and that only the most relevant and important information is shown to a user.
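A common data structure behind search indexes is the ‘inverted index’, which maps each word to the pages that contain it, so a query can be answered without re-reading every page. The sketch below is a simplified illustration, not how Google’s index actually works: the `PAGES` dictionary is hypothetical, and real search engines also rank results using many other signals. Here `search` simply returns the pages that contain every word in the query.

```python
import re
from collections import defaultdict

# Hypothetical crawled pages: URL -> page text.
PAGES = {
    "/services/seo": "Technical SEO audits improve crawling and indexing.",
    "/blog/crawling": "How a web crawler follows links between pages.",
    "/contact": "Contact our team about SEO.",
}


def tokenize(text: str) -> list[str]:
    """Lower-case the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages: dict[str, str]) -> dict[str, set[str]]:
    """Build an inverted index mapping each word to the URLs containing it."""
    index: dict[str, set[str]] = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index


def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return the URLs containing every word in the query (no ranking)."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())  # keep only pages with this word too
    return results
```

For example, `search(build_index(PAGES), "seo")` returns the set containing `/services/seo` and `/contact`, while adding `crawling` to the query narrows it to `/services/seo` alone.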

Important: If your web pages aren’t indexed, they can’t be found on a search engine!

How Search Engines Work – Crawling and Indexing

Crawling and indexing are a key part of how search engines work, and of how they consistently show the most relevant information when users search their index. For your website to appear as a relevant result for a user’s query, your website (and all relevant pages) must be crawlable and indexable.

If you do not want a page to appear in search results, you can add a ‘noindex’ tag: an on-page directive that instructs search engines not to add that page to their index. Search engines must still be able to crawl the page to see the tag, so a noindexed page should not also be blocked in your robots.txt file. And if you can’t work out why a page isn’t ranking, it is worth checking that it doesn’t have a ‘noindex’ tag.
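The most common form of the directive is a robots meta tag in the page’s `<head>`, such as `<meta name="robots" content="noindex">`. As a minimal sketch for checking a page, the Python below uses the standard library’s `html.parser` to look for that tag. It assumes you already have the page’s HTML as a string, and it does not check the `X-Robots-Tag` HTTP header or crawler-specific tags such as `name="googlebot"`, which can also carry the directive.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        # html.parser lower-cases tag and attribute names, but not values.
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for directive in attr_map.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if a robots meta tag contains 'noindex' (or 'none')."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # 'none' is shorthand for 'noindex, nofollow'.
    return bool({"noindex", "none"} & parser.directives)
```

For example, `has_noindex('<meta name="robots" content="noindex, nofollow">')` returns `True`, while a page whose robots tag says `index, follow` (or has no robots tag at all) returns `False`.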

How Important is Crawling and Indexing for SEO?

When it comes to SEO, crawling and indexing are vital considerations for website owners and developers who want to perform well in search result rankings.

Websites that search engines cannot easily crawl and index will tend to rank lower than those they can, which means fewer people visiting them. A page that crawlers cannot find cannot be ranked at all, which is why internal linking is so important.

Can You Improve the Crawling and Indexing on Your Site?

In short: yes, you can! A web crawler needs to be able to crawl through your entire site, not just reach it. Consider the tips below to make sure your site is optimised for a search engine’s web crawler software.

How to Improve Crawlability

- Don’t rely on non-text content such as images or videos being indexed; make sure key information is also available as text

- Make sure every page has at least one internal link pointing to it; internal linking is vital for crawlability

- Implement an up-to-date ‘sitemap’
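The internal-linking and sitemap tips can both be checked with a short script. The Python sketch below assumes a hypothetical, in-memory map of internal links (`LINK_GRAPH`) and a placeholder domain (`https://www.example.com`). `find_orphans` lists pages that no other page links to, and `build_sitemap` writes a minimal XML sitemap using the `urlset`, `url` and `loc` elements from the sitemaps.org protocol; optional elements such as `lastmod` are left out.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical site: each page maps to the internal pages it links to.
LINK_GRAPH = {
    "/": ["/about", "/services"],
    "/about": ["/", "/team"],
    "/services": ["/services/seo", "/contact"],
    "/services/seo": ["/contact"],
    "/team": [],
    "/contact": [],
    "/old-offer": ["/contact"],
}


def find_orphans(graph: dict[str, list[str]], homepage: str = "/") -> list[str]:
    """Return pages, other than the homepage, that no other page links to."""
    linked_to = {
        target
        for source, targets in graph.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(page for page in graph if page != homepage and page not in linked_to)


def build_sitemap(base_url: str, paths: list[str]) -> str:
    """Return a minimal XML sitemap with one <loc> per path under base_url."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for path in paths:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = base_url.rstrip("/") + path
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print("Orphan pages:", find_orphans(LINK_GRAPH))
    print(build_sitemap("https://www.example.com", sorted(LINK_GRAPH)))
```

On this example, `find_orphans(LINK_GRAPH)` returns `['/old-offer']`: the one page a crawler following links from the homepage would miss.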

How to Improve Indexing

- Ensure that key pages are not tagged ‘noindex’

- Make sure your site is full of high-quality, engaging content

- Ensure you are not ‘Keyword Stuffing’

- Try to limit the amount of ‘hidden text’ used throughout your site

Get Help From the SEO Experts at Wildcat Digital

Our expert team is on hand to help with your unique SEO requirements! Take a look at our full range of SEO services, and be sure to fill out a contact form on our website and we will get back to you as soon as possible. Alternatively, why not check out our Knowledge Base hub pages for more answers to your SEO questions?